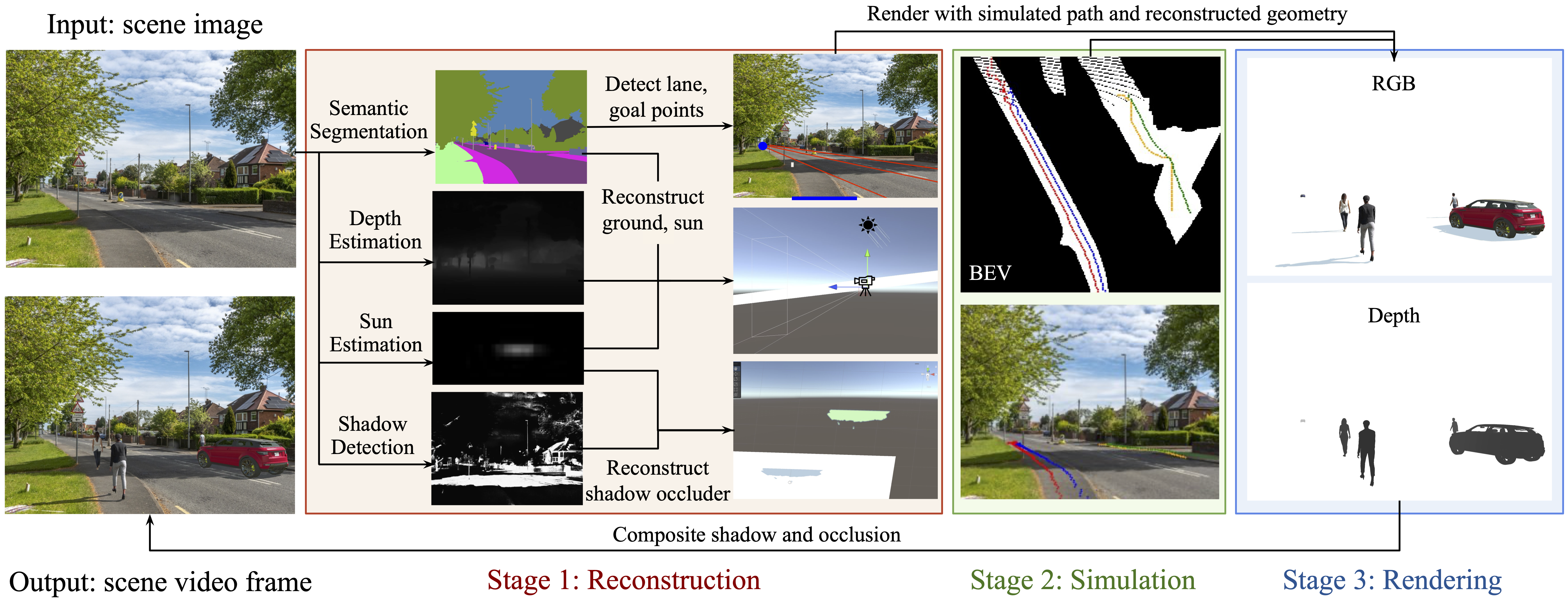

System Description

Our system has three major components. In Stage 1, we reason about the scene by predicting its semantic segmentation labels, depth values, sun direction and intensity, and shadow regions. We additionally determine walking and driving regions for adding pedestrians and cars (red straight lines: detected lanes; blue points: origin and destination points). In Stage 2, we simulate pedestrians in a 2D bird's-eye-view (BEV) representation of the scene and simulate car movements along the predicted lanes (the four colors correspond to four predicted paths, shown in both the BEV and the scene images). If a crosswalk is detected, we also simulate traffic behavior by controlling a traffic light. In Stage 3, we render the scene with the estimated lighting, shadows, and occlusions. The whole pipeline is automated.
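
To make the Stage 2 description more concrete, the following is a minimal sketch of how a pedestrian might be advanced in BEV coordinates while a traffic light gates the crosswalk. It is an illustration under stated assumptions, not the system's actual simulator: the names `Pedestrian`, `light_state`, `step_pedestrian`, and `simulate` are hypothetical, and the straight-line constant-speed motion, the 1.4 m/s walking speed, and the fixed 10 s green / 10 s red cycle are all assumed for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class Pedestrian:
    """A pedestrian in BEV coordinates (hypothetical structure, units in metres)."""
    x: float
    y: float
    goal_x: float
    goal_y: float
    speed: float = 1.4  # assumed walking speed in m/s


def light_state(t: float, green_s: float = 10.0, red_s: float = 10.0) -> str:
    """State of the car signal at time t for an assumed fixed-cycle light."""
    return "green" if (t % (green_s + red_s)) < green_s else "red"


def step_pedestrian(p: Pedestrian, dt: float, may_cross: bool = True) -> Pedestrian:
    """Move p straight toward its destination; hold position if it may not cross."""
    if not may_cross:
        return p
    dx, dy = p.goal_x - p.x, p.goal_y - p.y
    dist = math.hypot(dx, dy)
    step = p.speed * dt
    if dist <= step:
        # Close enough: snap to the destination point.
        return Pedestrian(p.goal_x, p.goal_y, p.goal_x, p.goal_y, p.speed)
    return Pedestrian(p.x + step * dx / dist, p.y + step * dy / dist,
                      p.goal_x, p.goal_y, p.speed)


def simulate(p: Pedestrian, duration: float, dt: float = 0.5) -> list:
    """Return the BEV trajectory of p; it walks only while the car signal is red."""
    t = 0.0
    trajectory = [(p.x, p.y)]
    while t < duration:
        p = step_pedestrian(p, dt, may_cross=(light_state(t) == "red"))
        trajectory.append((p.x, p.y))
        t += dt
    return trajectory
```

For example, `simulate(Pedestrian(0.0, 0.0, 0.0, 7.0), duration=20.0)` keeps the pedestrian at the origin point during the first (green) half of the cycle and moves it to the destination point once the car signal turns red. A real simulator would also need collision avoidance and the walkable-region mask from Stage 1, which this sketch omits.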